Data engineers are in demand. According to research, around 284,100 new data engineering jobs are projected over the next decade.

This is unsurprising because data is an important component of nearly every industry. Technological advancements have reduced the cost of data storage, enabling more organizations to use data for making informed decisions and staying competitive.

To become a data engineer, you need solid programming and database management skills. Knowledge of data warehousing and visualization is also essential.

Here’s what you need to know about pursuing a data engineering career.

What is the Purpose of Data Engineering?

Nowadays, companies have to carefully review various types of data from multiple sources to make the best business decisions. Data engineering streamlines this process by allowing data consumers to inspect all data in a quick, reliable, and secure way.

The main purposes of data engineering include the following:

- Centralize data via different data integration tools

- Safeguard enterprises from cyber attacks

- Enhance information security

- Provide the best practices to improve the overall product development cycle

What is a Data Engineer?

A data engineer designs, builds, and optimizes systems for data collection, storage, access, and analytics at scale. They build data pipelines that transform raw data into usable formats for data scientists, analysts, and an organization’s decision-makers who rely on data.

The primary duty of a data engineer is to ensure data is available, accessible, and secure for those who need it.

How Does Data Engineering Differ From Data Science And Data Analytics?

It is normal to get confused about how data science and data analysis differ from data engineering. Many skills overlap, and a data engineer in a small organization may have to cover the other two roles as well. But here is how these three positions differ.

A data engineer is the intermediary between a data scientist and a data analyst. They are responsible for pairing and preparing data for analytical or operational purposes. This role requires significant experience in the construction, development, and maintenance of data architecture. A data engineer also works with big data, compiles reports on it, and sends it to data scientists for analysis.

A data scientist uses advanced techniques, such as neural networks, decision trees, clustering, and more, to gain business insights. They are usually the most senior team member and will have deep expertise in statistics, machine learning, and data handling. Data scientists develop actionable business insights after receiving inputs from the data analyst and data engineer.

A data analyst is an entry-level role in the data analytics team. This role demands skill at translating numeric data into an easy-to-understand format for every stakeholder. Further, a data analyst must be proficient in other areas, including programming languages such as Python, tools like Excel, and the fundamentals of data handling, reporting, and modeling. After gaining enough experience, you can progress to become a data engineer or a data scientist.

In short, the difference between these three roles is the level of specialization and focus on data.

What Does a Data Engineer Do?

Data engineers design systems that unify data and help you navigate it. They bridge the gap between raw data and actionable insights, making them a vital component in data-driven decision-making.

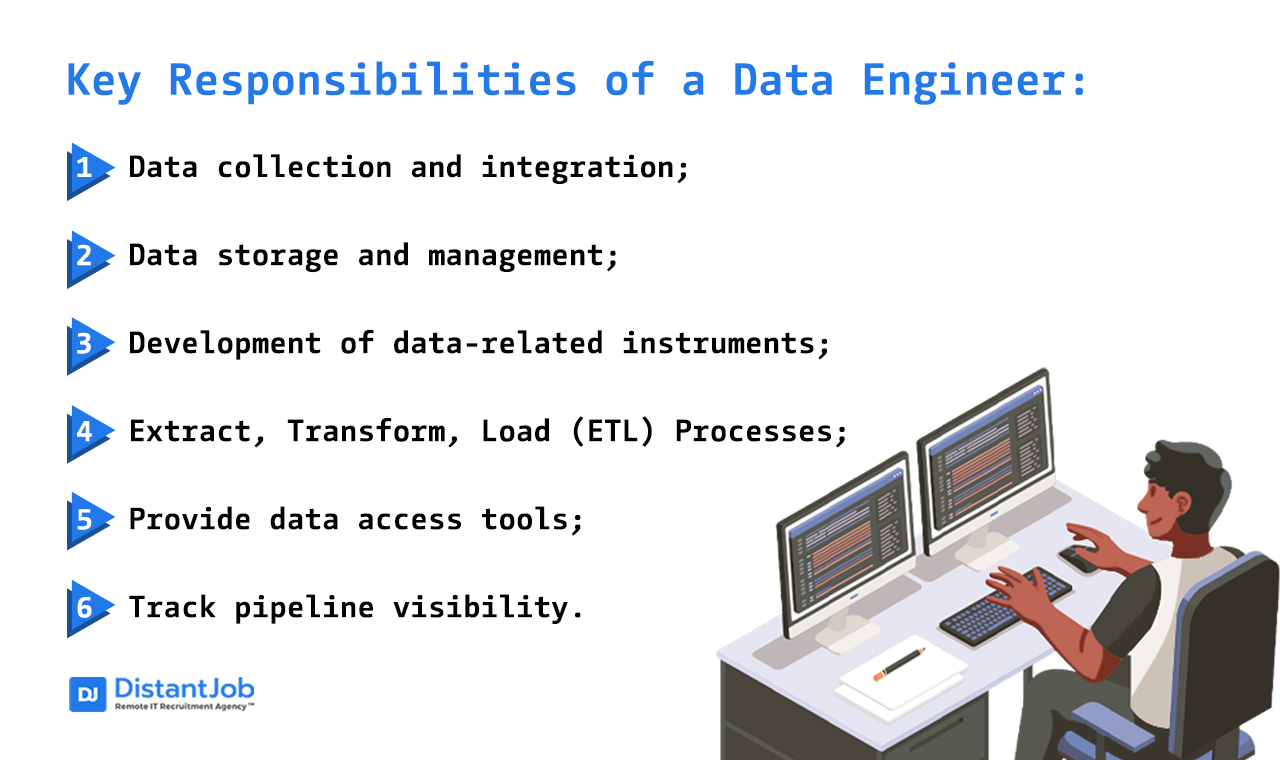

Here are some key responsibilities a data engineer performs within the data analytics team.

Key Responsibilities

1. Data collection and integration

Data engineers gather information from diverse sources, such as APIs, databases, external data providers, and streaming sources. To ensure a smooth flow of information into the data warehouse or storage system, they must design and implement efficient data pipelines.

2. Data storage and management

After data has been collected, data engineers are tasked with its storage and management. This requires them to choose the right database systems, optimize data schemas, and ensure data quality and integrity. Scalability and the ability to handle large volumes of data are also important factors.

3. Development of data-related instruments

Since data engineers are developers first, they use their programming skills to build, customize, and manage integration tools, warehouses, databases, and analytical systems.

4. Extract, Transform, Load (ETL) Processes

Data engineers build ETL pipelines to convert raw data into a suitable format for analysis. They then cleanse, aggregate, and enrich the data to ensure its usefulness for data scientists and analysts.

5. Provide data access tools

Data engineers may take up the role of BI developer to set up tools to view data, generate reports, or create visuals.

6. Track pipeline visibility

Because the warehouse needs to be cleansed from time to time, it is crucial to monitor the system’s performance and stability. Data engineers also monitor and modify the automated parts of a pipeline, since data, models, and requirements can change.
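The ETL responsibility described above (cleanse, aggregate, enrich, then load) can be illustrated with a small sketch. This is only one possible shape for such a pipeline, not a prescribed implementation: the sample CSV, the `REGION_BY_COUNTRY` lookup, the `sales_by_country` table, and all function names are hypothetical, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export: inconsistent casing, stray whitespace, a missing amount.
RAW_CSV = """order_id,country,amount
1, us ,10.50
2,DE,20.00
3,us,
4,de,5.25
"""

# Hypothetical lookup table used to enrich each row with a region.
REGION_BY_COUNTRY = {"US": "Americas", "DE": "Europe"}


def extract(text):
    """Extract: parse raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: cleanse, enrich, and aggregate the rows."""
    totals = {}
    for row in rows:
        amount = row["amount"].strip()
        if not amount:  # cleanse: skip rows with no amount
            continue
        country = row["country"].strip().upper()  # cleanse: normalize codes
        region = REGION_BY_COUNTRY.get(country, "Unknown")  # enrich
        key = (country, region)
        totals[key] = totals.get(key, 0.0) + float(amount)  # aggregate
    return [(c, r, round(t, 2)) for (c, r), t in sorted(totals.items())]


def load(conn, records):
    """Load: write the transformed records into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_country "
        "(country TEXT PRIMARY KEY, region TEXT, total REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO sales_by_country VALUES (?, ?, ?)", records
    )
    conn.commit()


def run_pipeline(conn, text=RAW_CSV):
    """Run extract, transform, and load in order; return the loaded records."""
    records = transform(extract(text))
    load(conn, records)
    return records


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    print(run_pipeline(connection))
```

Keeping each stage as a separate function makes it easier to test and monitor the stages independently, which matters once the pipeline runs unattended.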

Tools and Technologies Used by Data Engineers

Data engineers use various tools to design, develop, and maintain data pipelines. Some of the most popular ones include:

- Python: A popular, general-purpose programming language that is easy to learn. It has become a de facto standard for data engineering.

- SQL: Data engineers use SQL (Structured Query Language) to create business logic models, extract key performance metrics, execute complex queries, and build sound data structures.

- PostgreSQL: This is one of the most popular relational databases due to its active open-source community.

- MongoDB: A NoSQL database that is easy to use, highly flexible, and can store and query structured and unstructured data at a high scale.

- Apache Spark: An open-source analytics engine known for processing large-scale data. It supports multiple programming languages, including Scala, Java, R, and Python.

- Amazon Redshift: A fully managed cloud-based data warehouse designed for large-scale data storage and analysis.

- Apache Airflow: An open-source platform that helps build modern data pipelines through efficient task scheduling.
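To give a feel for what task scheduling means in the last item, here is a rough sketch of running pipeline tasks in dependency order. It is not Airflow's API: it uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+) as a stand-in, and the task names, the `run_tasks` helper, and the `log` list are all hypothetical.

```python
from graphlib import TopologicalSorter


def run_tasks(tasks, dependencies):
    """Run each task once, only after all of its upstream tasks have run.

    tasks: dict mapping task name -> zero-argument callable
    dependencies: dict mapping task name -> set of upstream task names
    """
    # static_order() yields every task after the tasks it depends on
    # and raises graphlib.CycleError if the dependencies form a cycle.
    order = list(TopologicalSorter(dependencies).static_order())
    for name in order:
        tasks[name]()
    return order


# Hypothetical tasks that simply record that they ran.
log = []
TASKS = {
    "extract": lambda: log.append("extract"),
    "transform": lambda: log.append("transform"),
    "load": lambda: log.append("load"),
    "report": lambda: log.append("report"),
}

# Each task lists the tasks that must finish before it starts.
DEPENDENCIES = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}

if __name__ == "__main__":
    print(run_tasks(TASKS, DEPENDENCIES))
```

A real scheduler adds much more on top of this ordering, such as timed runs, retries, and parallel execution, but the dependency graph is the core idea.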

What Skills Are Required in Data Engineering?

Data engineers are often regarded as software engineers with additional, data-specific skills. Below are the skills that help data engineers do their job:

Data Systems

Data engineers mainly process and manage raw data, so a solid grasp of different SQL and NoSQL data systems is essential.

Data Warehousing

A data warehouse is a central repository that stores data for analysis and querying. Businesses load it with data from various internal and external sources.

Understanding data warehousing enables you to design efficient database schemas and optimize queries for faster retrieval.
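As an illustration of schema design and query optimization, the sketch below builds a tiny star schema (one fact table, one dimension table) and indexes the join column. It assumes SQLite as a lightweight stand-in for a real warehouse, and the table names, column names, and sample rows are all hypothetical.

```python
import sqlite3


def build_warehouse():
    """Create a minimal star schema in memory and load a few sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE dim_product (
            product_id INTEGER PRIMARY KEY,
            category   TEXT NOT NULL
        );
        CREATE TABLE fact_sales (
            sale_id    INTEGER PRIMARY KEY,
            product_id INTEGER NOT NULL REFERENCES dim_product(product_id),
            sale_date  TEXT NOT NULL,
            amount     REAL NOT NULL
        );
        -- Index the join column so lookups can avoid a full table scan.
        CREATE INDEX idx_fact_sales_product ON fact_sales(product_id);
    """)
    conn.executemany(
        "INSERT INTO dim_product VALUES (?, ?)",
        [(1, "books"), (2, "games")],
    )
    conn.executemany(
        "INSERT INTO fact_sales (product_id, sale_date, amount) VALUES (?, ?, ?)",
        [(1, "2024-01-05", 12.0), (1, "2024-01-06", 8.0), (2, "2024-01-06", 30.0)],
    )
    conn.commit()
    return conn


def revenue_by_category(conn):
    """Join the fact table to its dimension and total revenue per category."""
    return conn.execute("""
        SELECT d.category, SUM(f.amount) AS revenue
        FROM fact_sales AS f
        JOIN dim_product AS d ON d.product_id = f.product_id
        GROUP BY d.category
        ORDER BY d.category
    """).fetchall()


if __name__ == "__main__":
    print(revenue_by_category(build_warehouse()))
```

Separating measurements (facts) from descriptive attributes (dimensions) keeps the large table narrow, which is one common way to make analytical queries faster.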

APIs

Application Programming Interfaces (APIs) are critical to data integration, which is a core part of data engineering.

APIs are essential in every software engineering project. They are the link between applications and data transportation.

Data engineering depends heavily on Representational State Transfer (REST) APIs, which communicate over HTTP and are therefore an essential web-based tool.
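A common integration task is pulling every record from a paginated REST endpoint. The sketch below shows one way this might look using only the standard library. The `api.example.com` URLs and the `{"results": [...], "next": ...}` page layout are assumptions made for illustration, since real APIs paginate in different ways, and the fake pages let the logic run without a network connection.

```python
import json
from urllib.request import urlopen


def fetch_json(url):
    """GET a URL over HTTP and decode the JSON response body."""
    with urlopen(url, timeout=10) as response:
        return json.load(response)


def fetch_all_records(first_url, fetch=fetch_json):
    """Follow 'next' links until the API reports no further page.

    Assumes each page looks like {"results": [...], "next": <url or null>},
    a common but not universal pagination layout.
    """
    records, url = [], first_url
    while url:
        page = fetch(url)
        records.extend(page["results"])
        url = page.get("next")
    return records


# Hypothetical responses standing in for a real API, keyed by URL.
FAKE_PAGES = {
    "https://api.example.com/orders?page=1": {
        "results": [{"id": 1}, {"id": 2}],
        "next": "https://api.example.com/orders?page=2",
    },
    "https://api.example.com/orders?page=2": {
        "results": [{"id": 3}],
        "next": None,
    },
}

if __name__ == "__main__":
    # Pass a dictionary lookup as the fetcher so no network call is made.
    print(fetch_all_records(
        "https://api.example.com/orders?page=1",
        fetch=FAKE_PAGES.__getitem__,
    ))
```

Passing the fetcher in as a parameter is a design choice that keeps the pagination logic testable offline; in production the default `fetch_json` would make the real HTTP calls.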

ETL Tools

Extract, transform, and load (ETL) tools are a category of data integration technologies. Data engineers use these tools extensively to maintain data pipelines, so make sure to familiarize yourself with how they work.

Low-code Development

Low-code development platforms are modern technologies that are increasingly replacing traditional ETL tools. However, ETL remains a core process in data engineering. SAP Data Services and Informatica are among the established tools for this purpose.

Programming Skills

To succeed as a data engineer, you must have advanced Python, R, C#, C++, and Java programming skills.

Machine Learning

Artificial intelligence (AI), machine learning (ML), and big data are closely related. Fundamental knowledge of these technologies will help you collaborate better with data scientists and other members of the engineering team.

Analytical Skills

Data engineers will eventually face various operational and data-related challenges. They will also need to partner with data analysts to design and develop prediction algorithms and data functions. With efficient analytical skills, you can handle these tasks.

Communication Skills

As a data engineer, you will have to work with many different teams, such as engineering, networking, and design teams. You may also need to communicate with clients and other external stakeholders to share data updates.

This makes the ability to pass information verbally and non-verbally an essential skill for a data engineer.

Are Certifications Necessary for Data Engineers?

Data engineers are in high demand, so if you have a portfolio of work and can demonstrate your skills, you can land a job. However, having some credentials can increase your chances. A data engineer certification proves you have invested time and effort to acquire new skills. It also verifies that your knowledge is current and relevant.

Below is a list of highly-rated data engineer certifications:

Google Certified Professional Data Engineer

The Google Certified Professional Data Engineer is a widely recognized industry certification. It aims to verify your capacity to use Google Cloud technologies. Gaining this certification may give you an advantage during the hiring process. However, ensure you have experience with Google Cloud platforms to get the best out of this program.

AWS Certified Big Data — Specialty

This specialty certification from Amazon Web Services (AWS) is aimed at professionals with experience using AWS technologies to build and implement big data solutions. It is ideal if you wish to develop your skills in managing and analyzing data on the most widely adopted cloud platform in the world.

IBM Introduction to Big Data with Spark and Hadoop

This course introduces you to the concepts and practices of big data. You will get familiar with the features, benefits, and limitations of big data and explore some of its processing tools. You will learn how Hadoop, Hive, and Spark can help organizations solve big data problems and realize the benefits of the data they acquire.

TDWI Data Modeling

TDWI’s Data Modeling course offers data modelers techniques for developing sustainable normalized and dimensional data warehouse and data mart models on relational DBMS platforms.

The course adopts a top-down approach, beginning with a business view that shows the enterprise’s major subject areas and domains. The models at this level are then transformed into logical models and, finally, into physical models.

Conclusion

Data engineering involves gathering and curating data. These tasks help businesses of all sizes keep track of their performance.

Data engineers play a critical role in managing, optimizing, retrieving, storing, and distributing the data that is needed to keep companies running and to track their performance.

If you need skilled data engineers who can become integral members of your team, DistantJob can help. We are a remote recruitment agency that connects IT professionals to the right companies.

Contact us today to start building your dream team of data engineers.