Most teams say they practice Test-Driven Development. Few can show you a session where a test was written first, watched fail, then made to pass with the smallest change that worked.

That gap matters. The value of TDD comes from the discipline itself, not from the test files it leaves behind. A repo full of tests written after the code is not the same artefact, and it doesn’t produce the same code.

This guide covers what TDD actually is and how the Red-Green-Refactor cycle runs in practice. It looks at what the research says about defect rates, where TDD pays off and where it doesn’t, and how the practice is changing now that AI assistants write a large share of the code being tested.

What is Test-Driven Development?

Test-driven development is a way of writing software where the test comes first and the code comes second. Kent Beck rediscovered and named the practice in the early days of Extreme Programming, and the goal he gave it was modest: “clean code that works.”

The cycle is called Red-Green-Refactor. Industry case studies at Microsoft and IBM reported pre-release defect density 40 to 90% lower on TDD projects than on comparable non-TDD projects.

Instead of writing the code and then testing it, you write the test first and the code second, so quality and clarity are built in from the beginning.

That sequence sounds trivial, but it forces a different order of thinking: you describe the behaviour you want before you decide how to build it. TDD is not just about finding bugs. It is also about design and code quality: keeping the code simple, functional, and easy to maintain.

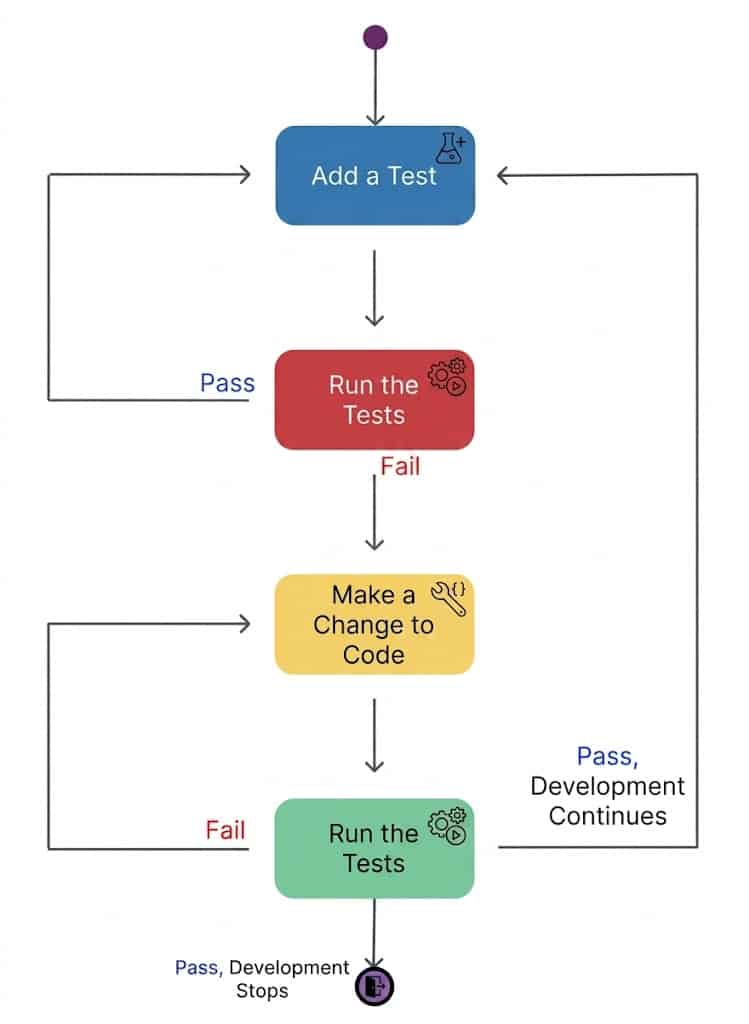

The Red-Green-Refactor Cycle: TDD’s Core

The TDD cycle has three phases. Each one has a different job, and skipping any of them is the most common reason TDD fails inside a team.

1. 🔴 Red Phase: Write a Failing Test

Pick the smallest piece of behaviour you can describe. Write a test for it. Run it. Watch it fail.

The failure matters. It rules out the case where a broken test would pass no matter what, and it confirms the test harness is actually running the file you think it’s running. If a brand-new test goes green on the first run, something is wrong. Usually, it’s that the test isn’t being picked up at all.

For example, a cart-total feature might start with a test asserting that an empty cart returns a total of zero. That test fails because Cart and total() do not exist yet.

2. 🟢 Green Phase: Make It Pass with the Smallest Change

Write the minimum code that turns the test green. The code doesn’t need to be elegant.

If returning a hard-coded 0.0 from total() is enough to pass the empty-cart test, return 0.0. Beck calls this “Fake It,” and he means it. The point is to get back to a known-good state quickly so you have a safe baseline for the next test.

If the implementation is obvious, you can instead use what Beck calls “Obvious Implementation” and just type the real code. The rule is the rhythm: as soon as a test surprises you with an unexpected failure, slow down and go back to faking values until the path is clear again.

3. 🔵 Refactor Phase: Improve the Code Without Changing Behaviour

With the test green, you can change the code’s shape without changing what it does. Rename a method. Extract a function. Pull a duplicated condition into one place. Run the tests after each change.

This is the step most teams skip, and it is also where most of the design value of TDD lives. The test protects the feature’s behaviour and lets you change code you would otherwise leave alone.

Red, Green, Refactor at a glance

| Phase | Goal | What you do | What you end with |
| --- | --- | --- | --- |
| Red | Specify the next behaviour | Write the smallest possible failing test | A test that fails for the right reason |
| Green | Make it work | Write the minimum code to pass | A passing test, possibly ugly code |
| Refactor | Make it clean | Remove duplication, rename, restructure | The same passing test, better code |

A full TDD session is dozens of these loops in an hour. The cycle is meant to be fast.

Why You Should Use TDD

Adopting TDD brings clear benefits to your daily work as a developer. You get an immediate feedback loop instead of discovering defects in a QA session weeks later. TDD also reduces cognitive load: you focus on one small, manageable problem at a time rather than juggling the entire system’s complexity.

1. Bug Prevention

Because you have built a safety net of tests, you can change code months later with the confidence that if something breaks, the tests will warn you immediately. And because bugs are caught as soon as they appear, debugging time drops sharply.

2. Cleaner Software Design

Writing tests first serves as a design review, leading developers to create modular, loosely coupled, and highly cohesive code. You cannot write a test for a class that is impossible to instantiate or has eight hidden dependencies. Writing the test first surfaces those problems before they are baked in.

Moreover, TDD only lets you add code in response to a failing test. Methods that “might be useful later” never get written, because no test demands them. Codebases built this way tend to be smaller and have fewer dead branches.

3. Living Technical Documentation

The resulting test suite acts as a specification that shows exactly how the system is intended to behave, aiding in developer onboarding.

4. Long-Term Bug Reduction

Although it may seem like you spend more time writing tests initially, you save a lot of time that would otherwise be spent on endless debugging sessions.

Don’t Write Tests Like Production Code

Teams new to TDD often make this mistake. They treat the test suite like production code and apply DRY (Don’t Repeat Yourself) aggressively. After six months, every test calls a chain of helpers, and nobody can read a test without opening four other files.

Tests must be optimised for reading, not for writing.

The fix is to apply two principles to two different things:

- DRY to the how: building a test database, mocking a payment gateway, generating a valid user object. Centralise this. If the auth flow changes, you only need to fix it in one place.

- DAMP (Descriptive And Meaningful Phrases) to the what: the scenario. Any developer should be able to open the test file and understand exactly what is being tested without leaving that screen.

| Principle | Use it for | Avoid it for |
| --- | --- | --- |
| DRY | Test fixtures, factories, mock setup, environment plumbing | Hiding the actual scenario behind shared methods |
| DAMP | The arrange/act/assert body, scenario-specific data | Repeating the database setup in every single test |

A good test reads like a short story about one situation. A test that reads like a Rube Goldberg machine is over-DRYed.

Practical Example

An “Over-DRYed” (Bad) Test in JavaScript:

```javascript
test('should process purchase', () => {
  const setup = helper.createStandardUserAndCart(); // What's in this cart? Which products?
  const result = service.process(setup.user);
  expect(result.status).toBe(200); // What defines success here?
});
```

A better DAMP Test would be:

```javascript
test('should apply 10% discount for purchases over $100', () => {
  // Arrange: the scenario data is visible right here
  const user = userFactory.create();
  const cart = [
    { item: 'Book', price: 120.00 }
  ];

  // Act
  const total = service.calculateTotal(user, cart);

  // Assert: 120 - 10%; toBeCloseTo avoids floating-point surprises
  expect(total).toBeCloseTo(108.00);
});
```

Treat your production code like a precision machine (DRY), but treat your tests like the living documentation of your system (DAMP). A test should not be a puzzle.

Does TDD actually reduce defects?

Yes, with caveats. Industry case studies at Microsoft and IBM reported pre-release defect density 40 to 90% lower on TDD projects than on comparable projects that skipped it. Other studies in telecom and other regulated domains showed lower defect density both before and after release.

Two caveats matter:

- Student studies are weaker. Academic experiments using students sometimes show only modest improvements, and code inspections occasionally outperform TDD in those settings. The benefit scales with the practitioner’s discipline.

- Initial velocity drops. Teams adopting TDD usually write code more slowly for the first few months. The win shows up later, in lower regression rates and lower change cost in the parts of the system that were previously fragile.

The Feedback Cycle

Test-Driven Development reverses the fear of messing with “ugly code.” Instead of avoiding the old module for fear of breaking it (which only increases technical debt), the team creates a safety net of tests.

| Situation | Without Test-Driven Development | With Test-Driven Development |
| --- | --- | --- |
| Changes in critical modules | Anxiety, long manual testing, bugs in production. | Confidence, fast feedback, safe deploys. |
| Code readability | Trial and error, confusing code. | Readable test cases (DAMP). |
| Maintenance cost | Increases exponentially over time. | Remains stable because the cost of testing each change is small. |

If you have a messy room in your code that nobody wants to go into, TDD is like turning on the light and clearing a path. Even when the code is complex, you know exactly when and where something stopped working.

Adding TDD to Legacy Systems

Michael Feathers defined legacy code as code without tests, and that definition is more useful than it sounds. When you cannot run a test, every change is a guess.

The path into a system like that is not “write 10,000 unit tests.” It is narrower, and the order matters: skipping the first two steps below (characterisation tests and a seam) is the most common reason TDD adoption fails on legacy systems.

- Write characterisation tests. Pin down what the code currently does, not what it should do. Call the function, observe the actual output, write an assertion that locks that output in. You now have a baseline that catches accidental changes.

- Find a seam. A seam is a place where you can change behaviour without editing the surrounding code. Dependency injection is the most common one, but inheritance and link-time substitution also count. You usually need a seam before you can write a real test in isolation.

- Use approval testing for messy outputs. When a function produces a 400-line report, asserting field by field is hopeless. Snapshot the whole output, diff it on every change, approve or reject.

- Only then start TDD on the new behaviour. Once a module has a baseline and a seam, new features inside it can be done test-first.

TDD in CI/CD: Where the Tests Run

In a modern pipeline, tests are layered by speed.

- Local / pre-commit. Fast unit tests run on every save or before every push. Hooks block commits that break them.

- CI build. The full unit suite runs on the server on every push, in a clean environment (GitHub Actions, Jenkins, etc.).

- Integration stage. Contract tests check how services talk to each other.

- Acceptance stage. ATDD-level checks against the agreed acceptance criteria before promotion to production.

The DORA metrics (deployment frequency, lead time, change failure rate, mean time to recover) are the cleanest way to see whether TDD is paying off in a CI/CD context. A lower change failure rate is usually the first signal.

TDD with AI Coding Assistants

AI assistants do not make TDD obsolete. If anything, they make it more useful, for one reason: a test is a precise specification, and precise specifications are exactly what AI tools need in Spec-Driven Development.

Here’s the workflow:

- The engineer writes the failing test by hand, including the edge cases they care about.

- The AI assistant generates output aiming to pass the test.

- The test runs. If it passes, the engineer reviews the code (to check that the AI has not gamed the test) and refactors. If it fails, the AI tries again.

The test is the contract. Without it, AI-generated code drifts toward whatever the model thinks “looks right,” which is not the same as correct. With it, the loop is bounded: the code either passes the test or does not.

This is also a hedge against a real risk. As AI-generated code makes up a larger share of commits, the cost of a missing regression suite climbs sharply. Reviewers cannot manually verify everything. TDD gives you a check that does not depend on a human reading every line.

Conclusion

TDD builds certainty. It allows you to design behaviour. You stop being a firefighter and start being an architect. The Red-Green-Refactor cycle isn’t just a workflow; it’s a discipline that ensures your code has a purpose and every feature has guardrails.

As AI continues to flood repositories with plausible-looking code, TDD is becoming one of the most valuable skills in a developer’s toolkit. It is one of the few reliable ways to ensure that the machines are working for you, rather than the other way around.

At DistantJob, we specialise in finding world-class remote developers who don’t just write code; they build whole systems. Whether you need TDD experts, architectural veterans, or specialists in the latest AI-driven workflows, we headhunt the top 1% of global hidden talent to fit your culture.


FAQ

Does TDD slow development down?

For the first few weeks, yes. Most teams report a measurable productivity dip while the rhythm is unfamiliar. After that, total cycle time usually evens out or improves, because the time saved on debugging and regressions outweighs the extra time spent writing tests up front.

What is the difference between TDD and unit testing?

Unit testing is a kind of test. TDD is a process for writing code. You can do unit testing without TDD by writing tests after the implementation, and most codebases do exactly that. TDD specifically requires the test to come first and to fail before any production code is written.

Should I use TDD or BDD?

Use both. TDD is for unit-level behaviour in the developer’s own language. BDD is for system or feature-level behaviour described in a format that non-developers can read. They operate at different levels, and most mature teams run them in parallel.

Does TDD work for machine learning and data science code?

Partially. Deterministic helper code (feature engineering, preprocessing, metric calculation) is a strong fit for TDD. Model training and evaluation are less suited, because the output is statistical, not exact. For those, characterisation tests and tolerance-based assertions tend to work better than strict TDD.

Is the goal of TDD 100% code coverage?

No. High coverage is a side effect of TDD, not its goal. The goal is design feedback and a regression safety net. Chasing coverage as a number leads to tests that exercise code without checking behaviour, which is worse than no test at all.

Can I use TDD with AI coding assistants?

Yes, and it is one of the better ways to use them. Write the failing test yourself, let the assistant draft the implementation, and use the test as the pass/fail check. This keeps the AI’s output bounded and verifiable.

What is the most common mistake teams make with TDD?

Skipping the refactor step. Teams move from Red to Green, ship, and never restructure. After a few months, the codebase looks like any other, the tests are brittle, and people quietly conclude TDD does not work. The refactor step is where most of the design value lives.