Hiring a senior MATLAB Engineer is an exercise in identifying a professional who can operate at the intersection of high-level mathematics and low-level hardware constraints. It requires a hiring manager who can look past the “MATLAB” label and identify the core functional expert: the MBD strategist, the Controls visionary, or the Embedded optimization specialist. To find the right fit, you must prepare a targeted list of MATLAB engineer interview questions.

The U.S. Bureau of Labor Statistics does not recognize “MATLAB Engineer” as a Standard Occupational Classification (SOC) code. It is a market title: a practical label that has emerged from job postings to describe a cluster of highly specialized engineering roles that share one defining characteristic. For these engineers, MATLAB and Simulink are not merely familiar tools, but the primary professional environment in which they design, analyze, and validate complex systems.

When you see this title on a résumé or post it on a job board, you are almost certainly recruiting for one or more of the following mapped roles:

This distinction matters for two reasons. First, compensation benchmarking: these mapped titles have distinct market rates and total compensation structures. A Controls Engineer with automotive ISO 26262 experience typically commands a different salary band than an Algorithm Engineer working on signal processing research. Consequently, your MATLAB engineer interview questions must pivot from syntax to system architecture.

Second, it clarifies the screening filter: MATLAB fluency alone is necessary but not sufficient. You are hiring for deep systems thinking, familiarity with real-time constraints, and in many cases, a safety-critical engineering discipline.

MATLAB fluency alone is a commodity skill at the undergraduate level. What separates senior talent is the ability to architect a complete model-based design flow: from requirements to generated, qualified production code.

For the remainder of this guide, “MATLAB Engineer” is used as a convenient umbrella term. However, this handbook covers only the shared MATLAB and Simulink core; you will need to tailor the questions to each role description yourself.

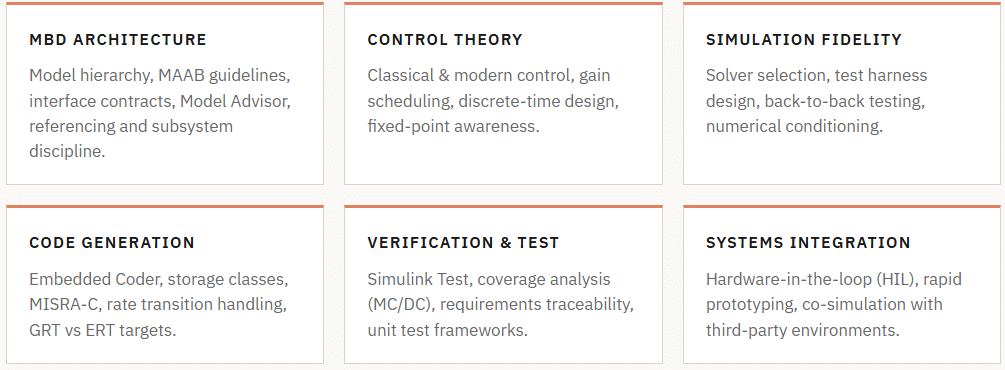

Senior-level MATLAB engineers are expected to operate across the full design lifecycle. The following competency domains apply broadly, though the relative weight shifts by sub-role (for example, a Controls Engineer will be weighted heavily toward classical and modern control theory, while an Algorithm Engineer’s weight shifts toward mathematical signal processing and numerical methods).

When developing your MATLAB engineer interview questions, ensure you cover these five critical domains:

Senior engineers must master MBD as an architecture for translating requirements into executable, verifiable specifications and production-quality, traceable C/C++ code. Skills here include architecting models for large teams, managing hierarchies, defining clear interfaces, and enforcing modeling standards (e.g., the MAAB guidelines or the dSPACE TargetLink modeling guidelines) using a checker such as Model Advisor.

Fluency is required in both classical (PID, Bode) and modern (LQR, Kalman filter) control. Senior roles demand expertise in nonlinear strategies, gain scheduling, anti-windup, and discrete-time implementation, considering effects like Tustin approximation, zero-order hold, and fixed-point word-length constraints.

Beyond control, senior roles require strong algorithm design (estimation, signal processing, fault detection, ML inference). Engineers must analyze performance regarding computational complexity, numerical stability (conditioning, round-off error), and real-time feasibility. Profiling, vectorization, and memory layout awareness are critical for embedded deployment.

A paper accepts everything, and a simulation, almost everything. Therefore, senior engineers must critically assess simulation trustworthiness. They need to understand how solver selection impacts fidelity and speed, construct meaningful test harnesses with disturbance models, and systematically validate results against hardware using back-to-back testing and statistical coverage analysis.

Deep knowledge of Simulink Coder/Embedded Coder is essential, including GRT vs. ERT targets, configuring data interfaces and storage classes for integration with legacy code, managing multi-rate transitions, and critically evaluating generated C code for MISRA-C adherence. Competency goes beyond clicking “Generate Code” to diagnosing issues like uninitialized data or non-deterministic execution.

The MATLAB/Simulink ecosystem contains over 100 toolboxes and blocksets. While the previous section focused on concepts, this one focuses on tooling. At the senior level, a MATLAB engineer is not expected to know all 100+ toolboxes, but rather to have a deep understanding of those that support the production flow.

Use this table as a screening checklist to help formulate specific MATLAB engineer interview questions:

| Toolbox / Blockset | Common Name | What to Screen For | Relevant Sub-Role |
| --- | --- | --- | --- |
| Stateflow | State Machine Designer | Hierarchical states, junction logic, temporal operators, Mealy vs. Moore semantics, truth tables. Ask about debugging non-deterministic transitions. | Controls, Embedded |
| Simulink Coder / Embedded Coder | Autocoder | Storage classes, custom targets, ERT vs. GRT, inline parameters, data interface configuration, and post-processing hooks. MISRA compliance workflow. | Embedded, MBD |
| Fixed-Point Designer | Fixed-Point Toolbox | Word length selection, scaling strategy (binary-point vs. slope/bias), overflow handling, range analysis, conversion from floating-point. Ask about precision loss in feedback paths. | Embedded, Algorithm |
| Simscape / Simscape Multibody | Physical Modeling | Domain-specific networks (mechanical, electrical, hydraulic, thermal), acausal modeling philosophy, solver coupling to Simulink, 1D vs. 3D plant models. | Simulation, Controls |
| Control System Toolbox | Classical Controls | LTI object manipulation, frequency-domain design, pole-zero analysis, and loop shaping. Also: System Identification Toolbox for plant model identification. | Controls |
| Simulink Test | Test Manager | Test harness architecture, test sequence blocks, signal editor, baseline comparison, coverage aggregation, and integration with Simulink Requirements. | MBD, Embedded |
| Polyspace | Static Analyzer | Code Prover vs. Bug Finder distinction, justification workflow, integration into CI/CD, MISRA checker, and IEC/ISO qualification kit usage. | Embedded |
| Robotics System Toolbox / ROS | Robotics / Autonomy | ROS 2 integration, sensor fusion, SLAM, co-simulation with Gazebo, and path planning algorithms. Relevant for autonomy and robotics sub-roles. | Algorithm, R&D |
| Deep Learning Toolbox | DL/ML Integration | Deploying trained networks to Simulink via predict blocks, code generation for neural networks, and quantization-aware training for embedded targets. | Algorithm, R&D |
| Simulink Requirements | Requirements Traceability | Bidirectional linking of model elements to requirements, coverage reporting per requirement, and integration with external requirements tools (DOORS, Jama). | MBD |

S-Functions enable embedding custom algorithms (C, C++, MATLAB) into Simulink simulations. Senior engineers know when to use Level-2 MATLAB versus C MEX S-Functions, and critically, how to write them for code generation compatibility, avoiding simulation-only logic that fails silently in generated code. For senior/lead roles, discuss using the S-Function Builder versus manual creation, and the System Object as a modern alternative.

Senior MATLAB engineers must integrate MATLAB/Simulink with other systems, as production environments are rarely pure. Essential integration patterns include: calling C/C++ libraries via MEX files; co-simulating with FMI/FMU models; using the MATLAB Engine API for C++/Python hosts; and interfacing with Python data science tools (Pandas, NumPy, PyTorch). The senior-level differentiator is cleanly managing these integration boundaries to maintain build integrity and determinism.

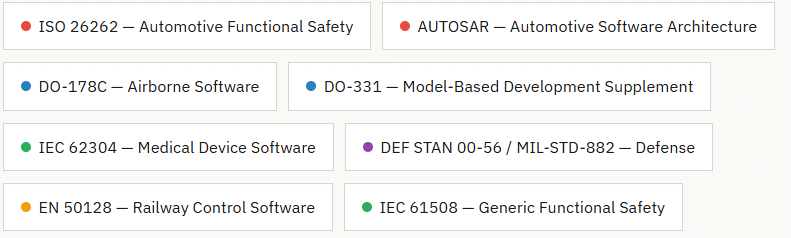

MATLAB and Simulink are prevalent in safety-critical industries where engineering must be conducted within rigorously defined regulatory and process frameworks. A senior engineer who cannot speak credibly about the applicable standard in their industry vertical is, from a practical standpoint, only partially qualified for the role. The following are the primary standards and ecosystems you should screen for:

Important: Do not assume that a candidate who lists a standard on their résumé has deep process knowledge. It is common for engineers to have worked on a project governed by ISO 26262, for example, without personally owning any of the compliance artifacts. Probe with specifics: “Tell me about an audit or review you participated in,” or “What was your personal responsibility in the safety case documentation?”

ISO 26262 is essential for automotive MATLAB roles. Senior candidates need to understand ASIL classification and decomposition (ASIL A through D), safety analyses (FMEA, FTA, HAZOP), “freedom from interference,” and the MathWorks IEC Certification Kit, which covers ISO 26262. Ask how they would show that Simulink models meet a target such as ASIL B through modeling guidelines (MAAB), Model Advisor checks, requirements traceability, structural coverage (MC/DC for ASIL C/D), and static analysis (Polyspace).

DO-178C defines objectives for airborne software by Design Assurance Level (DAL A-E), and its supplement DO-331 specifically covers model-based development. Senior candidates must distinguish “model as design” from “model as source code substitute” and know how the MathWorks DO Qualification Kit supports tool qualification in an Embedded Coder workflow. This experience is rare and highly valued.

AUTOSAR is the de facto software architecture standard in Tier-1 automotive. Production ECU software engineers must understand AUTOSAR component modeling in Simulink, the SWC/BSW/RTE distinction, and the integration of Simulink-generated code through ARXML (.arxml) descriptions. Lack of AUTOSAR knowledge disqualifies candidates for production MBD roles in this segment.

IEC 62304 focuses on software safety classification (Class A, B, C) and the corresponding lifecycle and testing rigor for medical device software. MATLAB is often used for prototyping, but the transition to production requires qualified tools and a documented lifecycle. Candidates should have experience with design history files, traceability matrices, and ISO 14971 risk management.

The questions below are organized by technical domain and serve as your MATLAB engineer interview questions. Each includes a brief note on what a strong answer typically contains and what a weak answer looks like. Questions are designed for senior-level candidates (5+ years of professional MATLAB/Simulink experience). For lead-level roles, combine these with the architectural and leadership questions in Section VI.

You are inheriting a Simulink model that has grown to over 5,000 blocks across a single layer. The team reports that compile time is exceeding 20 minutes, and code review is nearly impossible. Walk me through your architectural refactoring approach.

Follow-up probes: How do you decide between Model Reference and Subsystem Reference? How would you handle shared data between refactored units? What Model Advisor checks would you run first?

Explain the difference between variable-step and fixed-step solvers in Simulink, and describe a scenario where using a variable-step solver in HIL or production code generation would be inappropriate. How would you detect if a team member had made that mistake?

Strong answers will mention determinism requirements, the sample time propagation implications, and specific diagnostic steps such as checking solver settings in configuration sets and reviewing build log warnings about continuous-time blocks.
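To make the step-size concern concrete for interviewers, here is a minimal sketch in plain Python (not Simulink) that integrates dx/dt = -a·x with a fixed-step forward Euler scheme. The pole value `a` and both step sizes are hypothetical; the only fact relied on is that forward Euler is stable for this system only when h < 2/a.

```python
import math

def euler_decay(a, h, t_end, x0=1.0):
    """Integrate dx/dt = -a*x with fixed-step forward Euler; return the final x."""
    steps = round(t_end / h)
    x = x0
    for _ in range(steps):
        x += h * (-a * x)  # each step multiplies x by (1 - a*h)
    return x

a = 100.0                                     # hypothetical fast pole (10 ms time constant)
exact = math.exp(-a * 0.5)                    # analytic solution at t = 0.5 s
fine = euler_decay(a, h=0.001, t_end=0.5)     # h < 2/a: decays like the real system
coarse = euler_decay(a, h=0.025, t_end=0.5)   # h > 2/a: numerically unstable
print(f"exact={exact:.3e}  fine={fine:.3e}  coarse={coarse:.3e}")
```

A candidate who can explain why the coarse run grows while the physical system decays is reasoning about solver fidelity rather than simply trusting the plot.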

You need to wrap a third-party C library for mechanical simulation into a Simulink model that will ultimately generate production ECU code. Describe how you would implement this, what constraints exist on the library interface, and what testing you would perform to validate the integration.

Look for understanding of the MEX S-Function wrapper pattern, code generation compatibility (no dynamic memory allocation, no OS calls, no global mutable state), and the use of Processor-in-the-Loop (PIL) testing to validate numerical equivalence of the generated code.

Your model has a 1 ms control loop that reads from a sensor running at 10 ms. How do you handle this rate mismatch in Simulink, and what are the implications for code generation if you do nothing versus using explicit Rate Transition blocks?

A strong answer covers deterministic vs. non-deterministic rate transition blocks, the difference between protected and unprotected transitions, and how Rate Transition affects data integrity in a pre-emptive RTOS environment.

You have a continuous-time PID controller with integral action designed in the s-domain. When you discretize it using the Tustin (bilinear) approximation with a 10 ms sample time and implement it on hardware, you observe unexpected steady-state error under rapid setpoint changes. What are three candidate root causes, and how would you systematically isolate each?

Strong answers include: anti-windup not migrated correctly during discretization; frequency warping effect of Tustin approximation near Nyquist affecting the integral term; or derivative kick. The diagnostic process should include comparison of continuous and discrete step responses at multiple setpoints using Simulink test harnesses.
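As a reference point for the first root cause, the following is a minimal Python sketch of a PI controller with a trapezoidal (Tustin) integrator and clamping anti-windup. The class name, gains, and limits are hypothetical, and clamping is only one of several anti-windup schemes a candidate might describe (back-calculation is another).

```python
class TustinPI:
    """PI controller: trapezoidal (Tustin) integrator with clamping anti-windup."""

    def __init__(self, kp, ki, ts, u_min, u_max):
        self.kp, self.ki, self.ts = kp, ki, ts
        self.u_min, self.u_max = u_min, u_max
        self.integral = 0.0
        self.prev_error = 0.0

    def step(self, error):
        # Trapezoidal rule: the discrete image of 1/s under the bilinear transform.
        candidate = self.integral + self.ki * self.ts * 0.5 * (error + self.prev_error)
        u = self.kp * error + candidate
        saturated = min(max(u, self.u_min), self.u_max)
        # Accept the new integrator state only if the output is unsaturated,
        # or if the error is driving the output back toward the linear region.
        if u == saturated or (u > self.u_max and error < 0) or (u < self.u_min and error > 0):
            self.integral = candidate
        self.prev_error = error
        return saturated

ctrl = TustinPI(kp=1.0, ki=10.0, ts=0.01, u_min=-2.0, u_max=2.0)
for _ in range(1000):          # hold a large error for 10 s of simulated time
    held = ctrl.step(1.0)
recovery = ctrl.step(-1.0)     # error reverses sign
print(held, ctrl.integral, recovery)
```

Without the clamp, the integrator in this scenario would grow to roughly 100 and the output would stay pinned at the upper limit long after the error reversed; with it, the output leaves saturation on the first sample after the reversal.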

Walk me through your process for converting a floating-point Kalman filter implementation to fixed-point for a microcontroller with no FPU. What are the highest-risk numerical operations, and how do you verify that precision loss does not exceed acceptable bounds?

Look for discussion of covariance matrix positive-definiteness under fixed-point rounding, the use of Fixed-Point Designer’s range analysis and data type override workflow, and numerical simulation comparison between floating-point and fixed-point outputs across a representative test dataset.
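The comparison workflow described above can be illustrated with a minimal Python sketch: a one-state Kalman filter run once in floating point and once with every intermediate result rounded to a signed fixed-point grid. The Q-format (16-bit word, 12 fractional bits), the noise variances, and the test signal are all hypothetical, and this emulation is a simplification of what Fixed-Point Designer does.

```python
import math

def quantize(x, frac_bits=12, word_bits=16):
    """Round x to a signed fixed-point grid and saturate to the word length."""
    scale = 1 << frac_bits
    lo, hi = -(1 << (word_bits - 1)), (1 << (word_bits - 1)) - 1
    return max(lo, min(hi, round(x * scale))) / scale

def scalar_kalman(measurements, q_var, r_var, fx=lambda v: v):
    """One-state Kalman filter (random-walk model); fx is applied after each operation."""
    x, p = 0.0, 1.0
    out = []
    for z in measurements:
        p = fx(p + q_var)          # predict covariance
        k = fx(p / (p + r_var))    # Kalman gain
        x = fx(x + k * (z - x))    # update state estimate
        p = fx((1.0 - k) * p)      # update covariance
        out.append((x, p))
    return out

meas = [1.0 + 0.1 * math.sin(0.3 * i) for i in range(200)]  # synthetic test signal
ref = scalar_kalman(meas, q_var=0.01, r_var=0.1)
fix = scalar_kalman(meas, q_var=0.01, r_var=0.1, fx=quantize)

max_err = max(abs(a[0] - b[0]) for a, b in zip(ref, fix))
covariance_positive = all(p > 0.0 for _, p in fix)
print(f"max state error = {max_err:.2e}, covariance stays positive: {covariance_positive}")
```

In a real project the acceptance bound on `max_err` would come from the system requirements, and the check on the covariance generalizes to a positive-definiteness test in the multi-state case.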

Describe the implementation pattern you use for a gain-scheduled controller in Simulink and how you ensure bumpless transfer between operating points. What is the risk of using interpolated lookup tables for gain scheduling versus switching between discrete operating point controllers, and how do you validate coverage across the scheduling envelope?

A strong answer describes using Simulink’s 1-D/2-D Lookup Table blocks or gain-scheduled controller blocks to interpolate gains from a scheduling variable (e.g., speed), with bumpless transfer ensured by state reset or filtering. The main risk of interpolation is local instability between tabulated points, which calls for rigorous validation with Simulink Design Verifier or Monte Carlo simulations across the operating envelope to confirm adequate phase margin.
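The interpolation at the heart of a lookup-table scheduler can be sketched in a few lines of Python. The breakpoints and gains below are hypothetical, and clamping at the table ends (rather than extrapolating) is an assumed design choice.

```python
from bisect import bisect_right

def scheduled_gain(breakpoints, gains, x):
    """Linearly interpolate a gain; clamp at the table ends instead of extrapolating."""
    if x <= breakpoints[0]:
        return gains[0]
    if x >= breakpoints[-1]:
        return gains[-1]
    i = bisect_right(breakpoints, x) - 1
    frac = (x - breakpoints[i]) / (breakpoints[i + 1] - breakpoints[i])
    return gains[i] + frac * (gains[i + 1] - gains[i])

speeds = [0.0, 50.0, 100.0]   # hypothetical scheduling-variable breakpoints
kp_table = [2.0, 1.2, 0.8]    # hypothetical gains tuned at each breakpoint

for speed in (-5.0, 25.0, 75.0, 150.0):
    print(speed, scheduled_gain(speeds, kp_table, speed))
```

The gains at 25 and 75 were never tuned directly, which is exactly why a candidate should explain how stability is checked between breakpoints and not only at them.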

How would you implement an extended Kalman filter (EKF) for real-time execution on constrained hardware? Explain how you would optimize the code (vectorization vs. loops) and which numerical stability metrics, such as matrix conditioning, you would monitor to catch problems caused by fixed-point rounding errors.

A strong answer balances mathematical complexity against embedded feasibility, focusing on vectorization (SIMD) and numerical analysis to avoid filter divergence. Validation should prioritize profiling and residual error analysis to confirm accuracy within the CPU budget.

How would you approach implementing an extended Kalman filter (EKF) for state estimation on a resource-constrained microcontroller while ensuring numerical stability and real-time efficiency?

A senior MATLAB engineer should discuss Cholesky factorization (or Square Root Filtering) to maintain a positive definite covariance matrix, as well as vectorization and precision (single/double). The explanation should cover the O(n³) complexity of the inversion, dynamic allocation minimization, and code profiling to meet the control loop time budget.
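For interviewers who want a concrete anchor for the Cholesky discussion, here is a minimal pure-Python factorization that doubles as a positive-definiteness check on a covariance matrix. The example matrices are hypothetical; a production filter would use a library or generated routine, not this loop.

```python
import math

def cholesky(a):
    """Return lower-triangular L with L * L^T = a; raise ValueError if a is not positive definite."""
    n = len(a)
    l = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(l[i][k] * l[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                l[i][j] = math.sqrt(d)
            else:
                l[i][j] = (a[i][j] - s) / l[j][j]
    return l

def is_positive_definite(a):
    """True if the Cholesky factorization succeeds."""
    try:
        cholesky(a)
        return True
    except ValueError:
        return False

p_good = [[4.0, 2.0], [2.0, 3.0]]   # valid covariance
p_bad = [[1.0, 2.0], [2.0, 1.0]]    # symmetric but indefinite, e.g. after rounding damage
print(cholesky(p_good), is_positive_definite(p_bad))
```

Square-root filter formulations propagate the factor `L` itself, so the covariance it represents cannot lose positive semi-definiteness through rounding.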

How would you optimize a real-time fault detection algorithm based on moving windows for a microcontroller with tight memory and CPU constraints? Explain how vectorization and numerical stability (matrix conditioning) influence the choice between fixed-point and floating-point. What profiling metrics would you use to validate the algorithm’s latency?

Ideal answers address using Software-in-the-Loop (SIL) execution profiling, or Processor-in-the-Loop (PIL) for target-representative timing, to measure execution time; replacing expensive divisions with multiplications by a precomputed reciprocal; and ensuring that matrix operations avoid the accumulation of rounding errors that could lead to false positives in detection.
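A minimal Python sketch of a moving-window residual detector shows the memory and division points in miniature. The class name, window length, and threshold are hypothetical; the pattern of a fixed ring buffer, a running sum, and a reciprocal computed once is the part that carries over to embedded code.

```python
class MovingAverageDetector:
    """Ring buffer with a running sum: no per-sample division or allocation."""

    def __init__(self, window, threshold):
        self.buf = [0.0] * window        # fixed-size storage, allocated once
        self.inv_window = 1.0 / window   # reciprocal computed once at initialization
        self.threshold = threshold
        self.total = 0.0
        self.index = 0
        self.count = 0

    def update(self, residual):
        # Replace the oldest sample in the running sum with the newest one.
        self.total += residual - self.buf[self.index]
        self.buf[self.index] = residual
        self.index = (self.index + 1) % len(self.buf)
        self.count = min(self.count + 1, len(self.buf))
        mean = self.total * self.inv_window
        # Flag only once the window is full, to avoid start-up false positives.
        return self.count == len(self.buf) and abs(mean) > self.threshold

detector = MovingAverageDetector(window=4, threshold=0.5)
flags = [detector.update(r) for r in [0.1] * 4 + [2.0] * 4]
print(flags)
```

Note that the running sum itself accumulates rounding error over long runs, so a good follow-up is to ask how the candidate would bound or periodically reset that drift.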

Suppose you must validate a high-fidelity simulation model used as evidence in safety certification. How do you ensure that model simplifications and disturbance models do not mask instabilities in the physical system? How would you structure back-to-back tests to quantify the correlation between simulated and experimental data?

Strong answers ground simulation trustworthiness in deliberate solver selection (justified tolerances and step sizes) and in test harnesses that model parameter uncertainty. Senior-level verification adds statistical analysis (correlation metrics and model coverage) to show that the virtual environment faithfully represents worst-case hardware scenarios.

When developing a Digital Twin to validate a fault protection system, how do you quantify the model’s fidelity and ensure that the simulation results are representative of the actual hardware behavior?

The focus should be on back-to-back testing (MIL vs. SIL vs. PIL) and statistical correlation analysis between real telemetry and model outputs. It is crucial to build test harnesses with sensor error models (white noise, bias, drift) and evaluate the solver’s sensitivity (fixed vs. variable step) to avoid masking high-frequency transient phenomena due to truncation errors or inadequate worst-case scenario coverage.
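The sensor error terms named above (white noise, bias, drift) can be sketched as a small Python function for discussion purposes. The function name and all parameter values are hypothetical, and linear drift is an assumed simplification; real sensors often need a random-walk or temperature-dependent drift model.

```python
import random

def sensor_model(true_values, ts, bias=0.0, drift_rate=0.0, noise_std=0.0, seed=0):
    """Corrupt an ideal signal with constant bias, linear drift, and Gaussian white noise."""
    rng = random.Random(seed)  # fixed seed keeps the test harness repeatable
    out = []
    for k, value in enumerate(true_values):
        t = k * ts
        out.append(value + bias + drift_rate * t + rng.gauss(0.0, noise_std))
    return out

ideal = [1.0, 1.0, 1.0]
deterministic = sensor_model(ideal, ts=0.1, bias=0.5, drift_rate=1.0)
noisy_a = sensor_model(ideal, ts=0.1, noise_std=0.1, seed=42)
noisy_b = sensor_model(ideal, ts=0.1, noise_std=0.1, seed=42)
print(deterministic, noisy_a == noisy_b)
```

Seeding the noise source is what makes a stochastic harness usable for regression: two runs with the same seed must produce identical stimulus.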

When building a test harness for a critical system, how do you distinguish numerical instability (solver) from logical error (algorithm)? Detail your methods for correlating the fidelity of the simulation with real data, using statistical coverage and back-to-back tests to ensure that the model faithfully represents environmental disturbances.

The candidate is expected to discuss reducing the solver’s step size to isolate numerical artifacts, implementing stochastic disturbance models, and using Simulink Test to automate the quantitative comparison between simulation results and hardware telemetry logs (numerical equivalence).
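The quantitative comparison mentioned above usually reduces to a tolerance check between two sampled signals. The following Python sketch shows one way to express it, assuming both signals share the same time base; the function name and the tolerance values are hypothetical, not Simulink Test defaults.

```python
def back_to_back(reference, candidate, abs_tol=1e-6, rel_tol=1e-4):
    """Return (passed, worst_index, worst_error) for two equally sampled signals."""
    if len(reference) != len(candidate):
        raise ValueError("signals must share the same time base")
    worst_index, worst_excess, worst_error = -1, 0.0, 0.0
    for i, (r, c) in enumerate(zip(reference, candidate)):
        error = abs(r - c)
        # A sample fails when its error exceeds the combined absolute/relative band.
        excess = error - (abs_tol + rel_tol * abs(r))
        if excess > worst_excess:
            worst_index, worst_excess, worst_error = i, excess, error
    return worst_index < 0, worst_index, worst_error

mil = [0.0, 1.0, 2.0]        # model-in-the-loop reference
sil = [0.0, 1.00005, 2.0]    # within tolerance
pil = [0.0, 1.0, 2.01]       # out of tolerance at the last sample
print(back_to_back(mil, sil), back_to_back(mil, pil))
```

Reporting the worst offending sample, not just pass or fail, is what lets the engineer trace a mismatch back to a specific transient or operating point.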

After generating code from a Simulink model, a peer code review flags that the generated output contains a global variable that is written in two different tasks without a mutex, creating a potential race condition. How did this arise from the model, and how do you fix it at the model level rather than patching the generated code by hand?

This tests understanding that manual edits to generated code are almost always an anti-pattern in a proper MBD flow, and that the correct fix is to reconfigure the rate transition block for data integrity protection or restructure the model to eliminate the shared state.

Your Embedded Coder configuration claims MISRA C:2012 compliance, but Polyspace Code Prover is reporting a potential division-by-zero violation in the generated code. The denominator is a lookup table output that you believe is always positive at runtime. What is the correct way to resolve this without simply annotating the finding as “justified”?

Strong answers discuss adding a guard in the Simulink model using a saturation block or a conditional block with a minimum clamp, re-generating the code, and re-running Polyspace to confirm the orange check is resolved to green. Also worth discussing: using Polyspace’s range specifications to communicate known invariants to the analyzer.
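The guard itself is simple; what matters is that it lives in the model so it survives regeneration. As a language-neutral illustration, here is the logic in Python, with a hypothetical lower bound and the assumption that the denominator is meant to be positive.

```python
def guarded_divide(numerator, denominator, min_denominator=1e-3):
    """Divide after clamping the denominator to a positive lower bound.

    Assumes the denominator is physically non-negative; min_denominator is a
    hypothetical value that would come from the signal's documented range.
    """
    safe = max(denominator, min_denominator)
    return numerator / safe

print(guarded_divide(1.0, 0.25))   # normal operation: clamp is inactive
print(guarded_divide(1.0, 0.0))    # degenerate input: clamp bounds the result
```

A strong candidate will also point out that the clamp value is a design decision with its own requirement, since it silently caps the quotient when it activates.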

Your team wants to use a Python-based machine learning model as a “virtual sensor” component within a Simulink real-time simulation. What are the two or three main architectural patterns for achieving this, and what are the performance and deployment implications of each?

Patterns to discuss: (1) offline: Python produces lookup tables, or the trained model is exported as ONNX, imported through Deep Learning Toolbox, and compiled to C/C++ through code generation; (2) co-process: Simulink calls out to a Python process via TCP or shared memory, suitable only for software-in-the-loop, not HIL; (3) Python generates C/C++ code (e.g., via the third-party sklearn-porter package for scikit-learn models), which is then wrapped as an S-Function. Ask which they have actually done.

For senior and lead-level candidates, behavioral and situational questions are essential for assessing the skills that do not show up in a coding test or whiteboard session: project leadership under ambiguity, the ability to push back on incorrect decisions, mentorship quality, and the discipline to maintain rigor under schedule pressure. Use the STAR (Situation, Task, Action, Result) framing when probing these questions.

Tell me about the most difficult simulation bug you have ever debugged — one where the model ran without errors but produced physically incorrect results. How did you approach diagnosis, and how long did it take to identify the root cause?

Strong answers will describe a systematic process: isolating subsystems, building reduced reproduction models, comparing outputs with hand calculations or reference implementations, using Simulink’s signal logging and the Simulation Data Inspector for post-analysis. Watch for candidates who describe jumping immediately to heuristic adjustments without a structured hypothesis-driven process.

Describe a time when your simulation predicted one behavior and hardware testing produced a significantly different result. How did you reconcile the gap, and what modeling changes did you make?

This question probes whether the candidate treats simulation as a tool with known limitations or as an oracle. Strong candidates will describe specific modeling fidelity improvements (adding actuator dynamics, transport delays, sensor noise, or thermal effects) and explain how they quantified the acceptable residual gap.

You are three weeks from a software integration milestone. A project manager asks you to skip model-level unit testing and proceed directly to HIL testing to recover the schedule. What do you do?

This is a values and judgment question as much as a technical one. Look for candidates who can articulate the specific risk of skipping model-level testing (cost of late defect discovery, difficulty of root cause analysis in a fully integrated HIL environment), who would propose a risk-mitigated alternative rather than simply refusing, and who have done this in practice — ask for a real example.

Walk me through the most consequential architectural decision you have made on a Simulink-based project. One that you knew would be difficult to reverse. What alternatives did you evaluate? How did you build consensus? Looking back, was it the right call?

The quality of thinking around reversibility and tradeoffs is the signal here, not whether the decision was ultimately correct. Candidates who describe decisions made unilaterally without stakeholder consultation, or who cannot name specific alternatives they evaluated, are not yet operating at lead level, regardless of their technical depth.

When evaluating responses to your MATLAB engineer interview questions, watch for these behavioral patterns. Red flags do not automatically disqualify a candidate, but each warrants a targeted follow-up question before deciding to hire a MATLAB developer. Green flags are positive differentiators that justify premium offers.

When asked about generating code from a model, the candidate repeatedly expresses a preference for manually writing the equivalent C code. This reveals either a distrust of code generation tools (sometimes valid, but should be articulated with specific, reasoned concerns) or a fundamental misunderstanding of why MBD exists. For roles where autocoding is central, this is a significant cultural and productivity misalignment.

The candidate cannot clearly explain why a variable-step solver with tight tolerances would produce different results than a fixed-step solver at a coarse step size, or why this matters. This suggests they have been running simulations without critically evaluating whether the results are trustworthy — a particularly dangerous trait in safety-critical development.

The candidate has not used Git or another version control system for Simulink model files, and either does not see the value or believes model files are unmanageable in VCS. Modern workflows (the SLX file format, model comparison and merge tools) have made Simulink-in-Git straightforward. A senior engineer without VCS discipline introduces unacceptable configuration management risk in any serious product development environment.

For embedded and safety-critical roles, the candidate who “trusts Embedded Coder” completely and has never read the generated output to verify that the model’s intent was faithfully captured is a liability. Generated code must be reviewed for correctness, and this requires a willingness to engage with C output.

Every experienced MATLAB/Simulink engineer has a library of war stories involving algebraic loops, sample time inheritance bugs, zero-crossing detection misfires, or fixed-point overflow surprises. A candidate who cannot recall a single concrete example may have been operating at a superficial level, inheriting working models rather than designing from first principles.

The candidate believes that a well-structured Simulink model is self-documenting and that additional design documentation is unnecessary overhead. In any regulated industry, this attitude is immediately disqualifying. Even in unregulated contexts, it signals poor awareness of the knowledge transfer problem in engineering teams with turnover.

The MATLAB ecosystem spans too wide a range for any single engineer to have deep production experience across all toolboxes. A candidate who rates themselves 5/5 on Stateflow, Polyspace, Simscape, Deep Learning Toolbox, and Robotics System Toolbox simultaneously has almost certainly overstated their experience in at least some areas. Probe the claims systematically.

Senior engineers who have never had budget visibility may have used expensive toolboxes (Polyspace, Embedded Coder, physical modeling suites) without understanding that each carries a significant per-seat license cost. For lead-level roles where the engineer will influence toolchain decisions, this naivety can result in architecturally dependent designs that become cost-prohibitive to scale.

Unprompted, the candidate mentions Model Advisor scores, cyclomatic complexity of Stateflow charts, or use of automated style checkers as part of their normal development workflow, not as a response to audit pressure.

Without prompting, the candidate articulates what each testing level validates, what defects each is capable of detecting, and why all three are typically necessary in a safety-critical V-model development process.

Integrating Simulink builds, Model Advisor, code generation, and Simulink Test into Jenkins, GitHub Actions, or a similar CI system is non-trivial. Candidates who have done this independently show initiative, systems thinking, and awareness of team-scale quality infrastructure.

The willingness to critically evaluate past decisions (where they would choose differently in hindsight) correlates strongly with engineering maturity, intellectual honesty, and continued growth trajectory.

The candidate can name things they do not know well and has a clear pattern of seeking out domain specialists (plant physicists, safety engineers, hardware architects) rather than improvising in areas outside their core competence.

Use this rubric to grade the candidate’s performance across your MATLAB engineer interview questions. Each of the nine dimensions is scored 1 (does not meet bar), 2 (meets bar), or 3 (exceeds bar). A hire recommendation requires a total score of ≥ 18/27 with no more than two dimensions at 1. Any single dimension scored 1 by two or more independent raters is typically a disqualifying flag for senior roles.
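The scoring rule can be checked mechanically. The Python sketch below is one possible encoding: it applies the total and count-of-1s thresholds to each rater separately and flags any dimension scored 1 by two or more raters. Evaluating the thresholds per rater is an assumption, since the rubric does not say how to combine raters, and the example scores are invented.

```python
DIMENSIONS = [
    "MBD Architecture", "Control Theory", "Code Generation", "Simulation Rigor",
    "Industry Standards", "Problem-Solving", "Leadership & Mentorship",
    "Communication", "Documentation & VCS",
]

def hire_recommendation(scores_by_rater):
    """scores_by_rater: list of dicts mapping each dimension to a score in {1, 2, 3}."""
    # Consensus flag: the same dimension scored 1 by two or more raters.
    flagged = [d for d in DIMENSIONS
               if sum(1 for scores in scores_by_rater if scores[d] == 1) >= 2]
    per_rater_ok = []
    for scores in scores_by_rater:
        total = sum(scores[d] for d in DIMENSIONS)
        ones = sum(1 for d in DIMENSIONS if scores[d] == 1)
        per_rater_ok.append(total >= 18 and ones <= 2)
    return {"per_rater_ok": per_rater_ok, "flagged_dimensions": flagged}

rater_a = {d: 2 for d in DIMENSIONS}                              # total 18
rater_b = {**{d: 2 for d in DIMENSIONS}, "Communication": 1}      # total 17
rater_c = {**{d: 3 for d in DIMENSIONS}, "Communication": 1}      # total 25
result = hire_recommendation([rater_a, rater_b, rater_c])
print(result)
```

In this invented example two of three raters clear the numeric bar, but the shared score of 1 on Communication raises the consensus flag, which is the kind of disagreement the debrief should resolve.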

| Dimension | Score 1: Does not meet bar | Score 2: Meets bar | Score 3: Exceeds bar |
| --- | --- | --- | --- |
| MBD Architecture | Cannot structure a large model hierarchy; no awareness of modeling standards | Applies MAAB guidelines; understands Model Reference vs. Subsystem tradeoffs | Has designed complete MBD architectures for team-scale projects; owns model quality metrics |
| Control Theory | Limited to PID tuning; cannot explain discretization effects | Proficient in classical and modern control; understands fixed-point implications | Designs gain-scheduled or nonlinear controllers; reasons fluently about stability margins under uncertainty |
| Code Generation | Treats generated code as a black box; no MISRA awareness | Configures Embedded Coder for target integration; understands storage classes and MISRA workflow | Has integrated generated code into production ECU with qualified tool evidence; diagnoses generated code defects at the model level |
| Simulation Rigor | Cannot distinguish solver types; uses default solver settings universally | Selects appropriate solvers; designs meaningful test harnesses with representative disturbances | Defines and enforces simulation fidelity criteria; has a systematic back-to-back testing methodology |
| Industry Standards | Lists standard on résumé but cannot explain process requirements | Has personally produced compliance artifacts; understands ASIL/DAL decomposition concepts | Has owned a safety analysis deliverable; has navigated a formal audit or certification review |
| Problem-Solving | Uses heuristic adjustments; cannot describe a structured debugging process | Uses hypothesis-driven debugging; has concrete examples of systematic root cause analysis | Anticipates failure modes during design; creates reusable diagnostic infrastructure |
| Leadership & Mentorship | Individual contributor mindset only; cannot describe a mentoring experience | Has mentored junior engineers; provides technical guidance during model reviews | Has designed team-level quality infrastructure; actively builds capability in others as a strategic priority |
| Communication | Cannot translate model design decisions for non-MATLAB audiences | Communicates clearly across disciplines; adapts technical depth to the audience | Drives cross-functional alignment on complex technical decisions; writes high-quality design documentation |
| Documentation & VCS | Minimal documentation discipline; no VCS practice for models | Uses version control for all model artifacts; maintains design rationale documentation | Has established team-level documentation standards; contributes to or owns model configuration management process |

Post-interview debrief protocol: Conduct structured debriefs within 24 hours of the interview, while the evidence is fresh. Raters submit independent scores before discussion to avoid anchoring bias. The hiring manager focuses the debrief on score disagreements: unanimous scores need little discussion, while divergent ones signal either a problem with the question or genuine ambiguity about the candidate.